Click here to read an update on this project

This project has evolved into an operating system. I've written some more about what that is all about; check out the link above.

Tl;dr: github.com/augustl/concui is a research project where I attempt to invent a 100% functional and multi-thread based user interface library. It's written in Clojure and makes heavy use of its STM, immutability, and persistent data structures.

The mutable object oriented UI

User interface programming is arguably one of the best fits for object orientation. Having a View object that is extended by Control which is extended by Button is very intuitive. When a button is clicked, an event is triggered by calling a method on the object representing it. Place oriented programming applies well to user interfaces, since you don't care about old values; a user interface is not an information system.

All user interface libraries are mutable at their core. You have view objects. A view has children. A view can listen to events. Events bubble up the tree of views. This is identical in iOS, Qt, Android, HTML5, Flex, and so on.

One essential limitation of having a mutable tree structure is the fact that all UI frameworks also have (and need to have) a single thread for performing UI changes and rendering. There's simply too much state to coordinate, and using locks and mutexes on tree structures can easily cause more waiting time than you want, so it's simply not done. We run blocking operations (such as the actual rendering itself) on the main UI thread instead.

Things like double buffering or multiple graphics cards don't get around this limitation either. Double buffering means using two buffers to draw into for each render cycle, so that when the render cycle is over, the off-screen buffer that was rendered to is swapped with the on-screen buffer, causing instant instead of gradual updates to the screen for each frame. For the next frame, the buffer that was on the screen but is now off it is used for rendering. Rinse and repeat. Triple buffering is similar; a third buffer is used to concurrently perform the buffer swap and start drawing the next frame. But we still only render one frame at a time. Then there are multiple graphics cards. They typically share the workload by each rendering 50% of the scene (for example, the top half and the bottom half) for every frame. In other words: there's no frame level concurrency to be had in rendering operations, which is an essential limitation of the mutable view hierarchy. One frame at a time, in sequence, that's it.
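To make the buffer swap concrete, here is a minimal sketch in Clojure. It is only a model: a "buffer" is assumed to be a plain vector of pixel values, and `render-frame`, `swap-buffers` and `show-frames` are hypothetical names, not part of any real graphics API.

```clojure
;; Hypothetical model: a buffer is just a vector of pixel values.
(defn render-frame
  "Draws frame-no into the off-screen buffer; the on-screen one is untouched."
  [buffers frame-no]
  (assoc buffers :back (vec (repeat 4 frame-no))))

(defn swap-buffers
  "Swaps the on-screen and off-screen buffers in one step."
  [{:keys [front back]}]
  {:front back :back front})

(defn show-frames
  "Renders frames one at a time, in sequence, swapping after each one."
  [buffers frame-nos]
  (reduce (fn [bufs n] (swap-buffers (render-frame bufs n)))
          buffers
          frame-nos))

(show-frames {:front [0 0 0 0] :back [0 0 0 0]} [1 2])
;; => {:front [2 2 2 2], :back [1 1 1 1]}
```

Note how the `reduce` makes the sequencing explicit: each frame depends on the buffers left behind by the previous one, so there is nothing to run in parallel at the frame level.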

At a higher level, there are the operations you, the programmer, perform on the mutable UI tree. If you're in OO land, you typically don't take the time to do business logic unrelated to UI in a separate thread; you just do it in the main thread, since that's where it typically has to end up in order to change the UI. So you end up with the occasional blocked UI when your computer is busy and the JSON parsing takes a little longer than expected.

Even worse, when you do decide to do work in another thread, synchronizing it down to the main UI thread is your problem. There are few generic solutions out there, so you end up having to spawn threads and synchronize state by hand.

Functional at the low levels

Note: I'm using Android >= 3 as my reference here. I haven't investigated in detail how similar other UI libraries are to Android.

Every time a frame is drawn, 60 times per second, the entire screen is cleared and redrawn from scratch. This might sound a bit crazy to you. Is there really no caching? It's important to understand that at the very lowest level, something needs to say which color a pixel should be. OpenGL is only slightly higher level than this, and OpenGL commands are what all UI frameworks boil down to in the end. (Good ones, at least. Android 1 and 2 used software, not OpenGL commands on a GPU, to draw, which is why they were laggy and slow.) While the high level UI libraries typically let you state things like "move the view 2 pixels to the right", OpenGL doesn't. You need to first draw whatever was behind the view in the gap that is created from moving the view. Then the view needs to be drawn in its new position, with a rich combination of "draw texture", "set color and draw line", and so on. Things like shadows on views and the fact that any view might move at any time make it difficult to cache draw results in an efficient manner. This is why it's usually faster to just reissue all the commands for every frame, 60 times per second.

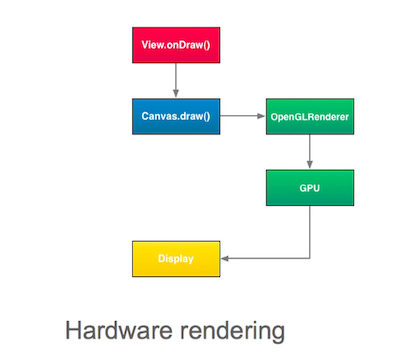

There are some things that are expensive, though: generating the list of OpenGL commands to call in the first place. Here's the flow in Android >= 3:

The draw() method of a View is a bunch of Java method calls. You can inherit from View and do it yourself. Things like Button have their own draw() implementation with Java code to draw a texture, the text, etc. This essentially ends up being serialized to raw OpenGL commands, and cached. The draw() method is not called again until something about the view changes that requires it to recalculate the OpenGL commands it needs to issue to draw itself. To further improve the performance of rendering a view, these commands are merged into what OpenGL calls a display list. A display list is OpenGL's way to merge a bunch of commands into one command, and the specifications say it's up to the driver to optimize as much as it wants (convert three calls to create vertices into one call that creates a triangle, for example), and store the commands in graphics memory. The end result is that drawing a view means issuing one single Java method call, which is more than fast enough.
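The caching idea can be sketched in a few lines of Clojure. This is an illustration only, not Android's implementation: the view is assumed to be a plain map, commands are assumed to be vectors, and `draw`, `commands-for` and the `draw-calls` counter are hypothetical names. The counter exists purely to show how often the expensive step runs.

```clojure
;; Hypothetical names throughout; this is not Android's or concui's API.
(def draw-calls (atom 0)) ; counts how often the expensive step runs

(defn draw
  "Stands in for a view's draw(): produces the list of drawing commands."
  [view]
  (swap! draw-calls inc)
  [[:draw-texture (:texture view)]
   [:draw-text (:text view) (:x view) (:y view)]])

(defn commands-for
  "Returns [cache commands]. Reuses cached commands unless the view changed."
  [cache view]
  (let [{cached-view :view commands :commands} (get cache (:id view))]
    (if (= cached-view view)
      [cache commands]
      (let [fresh (draw view)]
        [(assoc cache (:id view) {:view view :commands fresh}) fresh]))))

(let [button    {:id 1 :texture "button.png" :text "OK" :x 10 :y 20}
      [cache _] (commands-for {} button)                   ; first frame: draw runs
      [cache _] (commands-for cache button)                ; unchanged: cache hit
      [_ cmds]  (commands-for cache (assoc button :x 12))] ; changed: draw runs again
  cmds)
```

After these three frames, `draw` has run twice: once for the initial frame and once after the view moved. Because the view is an immutable value, "did it change?" is a plain equality check.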

(Aside: this is one of the reasons "hardware accelerated" animation is fast. Most animation can be expressed as an OpenGL transformation matrix applied to an existing OpenGL display list, which is much faster than a bunch of Java method calls and calculations and rasterization in software.)

Here's the kicker: This is a great fit for a functional programming style. We can model the rendering of a UI in the following way: for each frame to render, take the view hierarchy as an argument, and return the rendered bitmap (texture). The actual rendering function will use the OpenGL state machine internally, but as the Clojure docs for transients say:

If a tree falls in the woods, does it make a sound?

If a pure function mutates some local data in order to produce an immutable return value, is that ok?

So even though a lot of OpenGL state machine goodness happens inside the function, the function itself ends up being completely pure, since it doesn't return any of the OpenGL mutable stuff to the outside world.
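A small sketch of the same principle, using Clojure's transients in place of the OpenGL state machine. The function name `draw-commands` and the shape of the view map (`:id`, `:children`) are assumptions made for illustration. Inside the function a mutable transient vector is filled in; outside it, callers only ever see an immutable vector.

```clojure
(defn draw-commands
  "Flattens a nested view into a vector of drawing commands.
   Pure from the caller's point of view: the transient never escapes."
  [root]
  (letfn [(walk [acc view]
            ;; conj! mutates in place; we always use its return value
            (reduce walk
                    (conj! acc [:draw-rect (:id view)])
                    (:children view)))]
    (persistent! (walk (transient []) root))))

(draw-commands {:id :root
                :children [{:id :a}
                           {:id :b :children [{:id :c}]}]})
;; => [[:draw-rect :root] [:draw-rect :a] [:draw-rect :b] [:draw-rect :c]]
```

Calling it twice with the same view always gives the same result, and nothing outside the function can observe the mutation.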

A new model

A user interface is best represented as a tree structure. A view has a list of child views, and so on. But does the tree structure have to be mutable? As we've seen, a functional process is highly applicable for user interface rendering, and functional programmers don't like mutable data that changes under their feet, requiring manual enforcement of object ownership policies, or locking, and so on. So let's see what we can do.

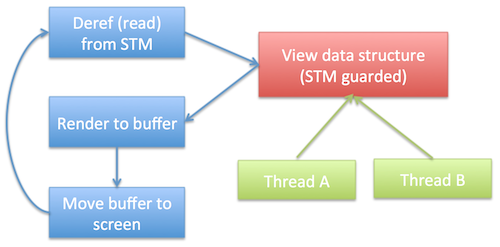

In the new model, we store the user interface as a persistent Clojure data structure. More on its structure later. This data structure is managed via the Clojure STM, so we can get a consistent perception of the world without locking or manually enforced ownership policies. Then, a renderer runs in a separate thread. 60 times a second (configurable, of course), it reads this data structure and renders it, using OpenGL. And voila, that's the rendering flow in concui!
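As a rough sketch of this flow, assuming a single ref holds the whole UI and a "frame" is just a string: `ui`, `render` and `run-renderer` are hypothetical names, and the real renderer issues OpenGL commands instead of building strings.

```clojure
;; Hypothetical stand-ins: `ui` is the shared state, `render` the pure renderer.
(def ui (ref {:bg-color [1 0 0] :views {}}))

(defn render
  "Pure: turns one immutable snapshot of the UI into a 'frame' (a string here)."
  [snapshot]
  (str "bg=" (:bg-color snapshot) " views=" (count (:views snapshot))))

(defn run-renderer
  "Renders `frames` frames on a separate thread, one every `interval-ms`.
   Returns a future holding the vector of rendered frames."
  [ui-ref frames interval-ms]
  (future
    (loop [n 0, out []]
      (if (= n frames)
        out
        (let [frame (render @ui-ref)] ; deref yields one consistent snapshot
          (Thread/sleep (long interval-ms))
          (recur (inc n) (conj out frame)))))))

(def frames (run-renderer ui 3 16))          ; roughly 60 frames per second
(dosync (alter ui assoc :bg-color [0 1 0]))  ; any thread may update the UI
(count @frames)                              ; blocks until 3 frames are done
```

The renderer never takes a lock. Each frame is computed from whatever immutable value the ref held at the moment it was read, so a concurrent update can only affect the next frame, never a frame in progress.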

You can create as many threads as you want in addition to this. Since you use the Clojure STM to update the UI tree structure, this is very hassle-free and avoids the typical timing complexities of a traditional mutable system. It's also not your problem to figure out how to use multiple threads and synchronize the state - Clojure handles all of this for you!

The actual data structure is private to the renderer. There are two reasons for this. First, manually maintaining a huge tree structure is a bit of a hassle. Second, we don't actually store a tree, but a collection of maps: one containing all the views, and one where the key is a view ID and the value is a list of child views for that view. This makes updates deep down the tree structure effectively O(1), since a view is looked up directly by its ID instead of by walking the tree. We provide a transaction based API of facts (very similar to that of Datomic) to 1) abstract away the internals of the data structure, 2) make it more convenient to work with, and 3) provide an API to consistently update the state of multiple views as a single atomic operation.

    (def rdr (r/create-renderer))

    ;; Set the background color of the entire frame
    @(r/transact rdr [[:renderer/bg-color (r/color 1 0 0)]])

    ;; Coordinated change of multiple views
    @(r/transact rdr [[:view/attr my-view-id :pos-x 20]
                      [:view/attr other-view-id :pos-x 50]])

I won't explain the details of what exactly my-view-id is; take a look at the code on Github if you want to know more.

But what we're left with is a persistent data structure representing the view, and a nice API on top of it to atomically update the UI state. There's no manual coordination of state required. Since both reads and writes are 100% atomic and consistent (again, thanks to the Clojure STM), you're guaranteed that the renderer won't render my-view-id at the new position 20 and other-view-id at its old position.
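To illustrate how facts, flat maps and the STM could fit together, here is a hypothetical sketch. The fact shapes mirror the example above, but everything else is assumed rather than taken from concui: the state layout, `apply-fact`, and this `transact`, which returns the new state directly instead of something you deref.

```clojure
;; Hypothetical sketch; the implementation is assumed, not concui's.
(def state
  (ref {:bg-color [0 0 0]
        ;; view ID -> attributes
        :views    {:root {:pos-x 0} :a {:pos-x 0} :b {:pos-x 0}}
        ;; view ID -> IDs of its child views
        :children {:root [:a :b]}}))

(defn apply-fact
  "Pure: returns the state with one fact applied."
  [s [kind & args]]
  (case kind
    :renderer/bg-color (assoc s :bg-color (first args))
    :view/attr         (let [[id attr value] args]
                         ;; direct lookup by ID, no tree walk
                         (assoc-in s [:views id attr] value))))

(defn transact
  "Applies all facts in one STM transaction, so readers see all or none."
  [state-ref facts]
  (dosync (alter state-ref #(reduce apply-fact % facts))))

(transact state [[:view/attr :a :pos-x 20]
                 [:view/attr :b :pos-x 50]])

(get-in @state [:views :a :pos-x]) ; => 20
(get-in @state [:views :b :pos-x]) ; => 50
```

Because the whole UI lives in one immutable value behind one ref, a reader that derefs `state` gets either the value from before the transaction or the value from after it. There is no in-between value in which only one of the two views has moved.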

What it's not

This is by no means intended to be practical. You will almost certainly not use this library to create your next JVM (or Dalvik) based UI app. It's purely a research project. Since it has its own OpenGL based data structure, sharing existing UI components in an existing UI framework (like Android's built-in buttons and text views, and so on) is difficult.

If it turns out that concui provides a usable method for building user interfaces, and if we have 100 core machines in the next few years, we might start to see some vendors ship UI frameworks using similar approaches. But until then, you're better off using Pedestal, or any of the other functional UI libraries that treat the mutable DOM as I/O.

Also, concui is not a web framework. It runs on the JVM, powered by Clojure, issuing OpenGL commands to hardware. A ClojureScript + WebGL version could probably be made, but that's not a goal for me since the web is very single-threaded by nature.

What's to come

One of the biggest unknowns is the lack of coordination between the view data structure and what's actually visible on the screen at the current time. You can at any time read the UI data structure, but you won't be able to know if that UI has actually been rendered on the screen yet, since rendering happens on a separate thread. So interesting (and perhaps beneficial) side effects of uncoordinated timing will likely occur. Perhaps there will be some kind of coordination eventually. After all, all that's really needed is to keep the persistent data structure representing the view hierarchy at the time of rendering around, since Clojure provides superb time management through its immutable values. But the existence of two data structures might cause more problems than it solves. Only time will tell. This is why I call concui a research project!
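One possible shape for that coordination, sketched under the assumption that the renderer simply remembers the exact value it last drew. All the names here (`ui-state`, `last-rendered`, `render-once!`, `on-screen?`) are hypothetical, and this is an idea to explore rather than something concui does.

```clojure
;; Hypothetical names; a sketch of the idea, not concui's implementation.
(def ui-state (ref {:views {:a {:pos-x 0}}}))
(def last-rendered (atom nil)) ; the exact value the renderer last drew

(defn render-once!
  "Takes a snapshot, 'renders' it, then records which snapshot is on screen."
  [ui-ref]
  (let [snapshot @ui-ref]
    ;; ... issue drawing commands for `snapshot` here ...
    (reset! last-rendered snapshot)))

(defn on-screen?
  "True if the current UI state is the one most recently rendered."
  [ui-ref]
  (= @ui-ref @last-rendered))

(render-once! ui-state)
(dosync (alter ui-state assoc-in [:views :a :pos-x] 20))
(on-screen? ui-state) ; => false, the change has not been drawn yet
(render-once! ui-state)
(on-screen? ui-state) ; => true
```

Keeping the old snapshot around is cheap because persistent data structures share structure between versions. The check itself is still uncoordinated, though: by the time a caller acts on the answer, the renderer may already have drawn another frame.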

I'll be working on concui with the goal of submitting a proposal to Clojure/conj 2013 before the deadline in mid-June. Hopefully I'll have something interesting before then. I'd love to show this to the Clojure crowd, and I have high hopes that this project will provide actually useful and unique research to the world of user interface programming.